Do you want to build or work for a data product company, especially as a data engineer trying to build the Spark muscles needed for heavy lifting at giant scale? Then you need to understand how these companies optimize the large cost of extracting information from data.

What could go wrong with a house by the lake, right? Imagine you live there alone and the fish keep multiplying. You don’t own any fishing equipment that scales, so the lake gets polluted, and instead of getting returns from your fish you end up paying a higher cost to the services company that fixes the lake for you.

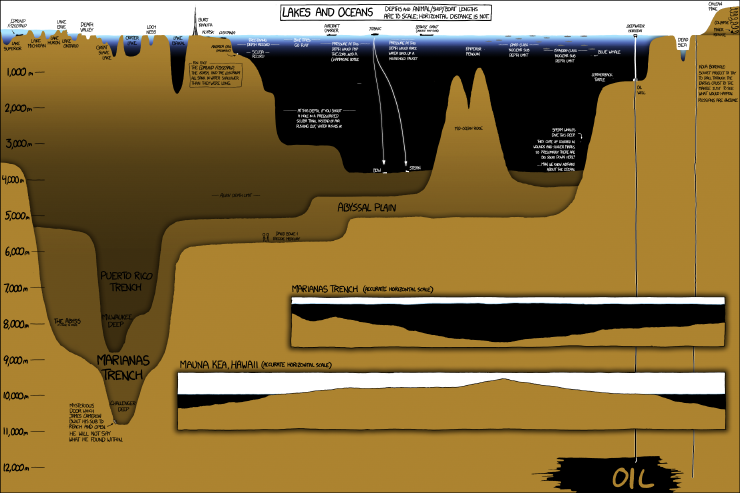

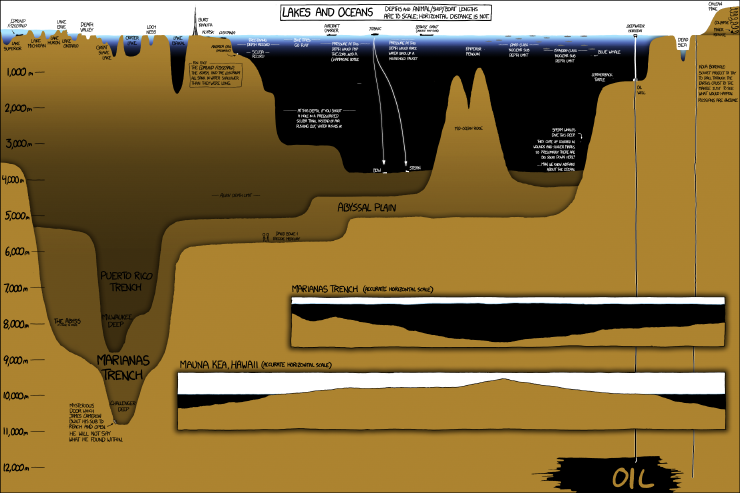

https://images.app.goo.gl/baa5iPg1gbM4B85B7

Change data capture (CDC) is the idea that when relational or transactional tables are modified, you emit a stream of those updates. This lets you keep copies in sync by capturing changes to tables as they happen.
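To make the update stream concrete, here is a minimal sketch of a consumer that replays change events onto a copy of a table. The `ChangeEvent` shape and the `apply_changes` helper are assumptions made for illustration, not the format of any particular CDC tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChangeEvent:
    """Hypothetical change event; real CDC tools use their own formats."""
    op: str               # "insert", "update", or "delete"
    key: int              # primary key of the affected row
    row: Optional[dict]   # new row contents, None for deletes


def apply_changes(replica: dict, events: list) -> dict:
    """Replay change events, in order, onto a replica keyed by primary key."""
    for event in events:
        if event.op in ("insert", "update"):
            replica[event.key] = event.row
        elif event.op == "delete":
            replica.pop(event.key, None)
        else:
            raise ValueError(f"unknown operation: {event.op}")
    return replica


# A tiny stream of changes emitted by the source table.
events = [
    ChangeEvent("insert", 1, {"name": "trout", "count": 10}),
    ChangeEvent("insert", 2, {"name": "carp", "count": 4}),
    ChangeEvent("update", 1, {"name": "trout", "count": 12}),
    ChangeEvent("delete", 2, None),
]

replica = apply_changes({}, events)
print(replica)
```

The replica only stays correct if events are applied in the order they were emitted, which is one reason lower latency means more work.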

Although CDC has many advantages, there are also some problems that make it difficult:

- Lower latency means more work

- Write amplification - the extra work done at write time as a result of balancing the trade-off between efficiency at write time and efficiency at read time

- Batch writes with double update and possible inconsistency

- Read requirements with the different types of deletes in a table

Hidden cost in data lakes:

With the shift that came around 2015, built on cheap object storage such as AWS S3 (released in 2006), data lakes became an affordable data warehousing alternative. The economics behind the budgeting decisions that affect data architecture depend on platform expectations and on the impact on the organization's revenue. However, business analytics teams will never accept an excuse for stale data. Urgent data retrieval requires fast computation, which implies high cost.

When we store data in a file format like CSV or Parquet in cloud storage, it needs a catalog to persist the addresses of all the files that represent a table, and a query engine to make the data accessible. For more detail, see the AWS cost modeling guide for data lakes.
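As a rough local illustration of that split, the sketch below writes two CSV files, records their paths in a dictionary that plays the role of the catalog, and uses a small `scan` function as a stand-in for the query engine. The file names, the `fishes` table, and both helper functions are assumptions for this example; real catalogs and engines do far more.

```python
import csv
import tempfile
from pathlib import Path


def write_part(path: Path, rows: list) -> None:
    """Write one data file of the table, with a header row."""
    with path.open("w", newline="") as f:
        writer = csv.writer(f)
        writer.writerow(["id", "species"])
        writer.writerows(rows)


def scan(catalog: dict, table: str) -> list:
    """Toy 'query engine': read every file the catalog lists for a table."""
    rows = []
    for file_path in catalog[table]:
        with open(file_path, newline="") as f:
            rows.extend(csv.DictReader(f))
    return rows


# A temporary directory stands in for the cloud storage bucket.
lake = Path(tempfile.mkdtemp())
part_0 = lake / "part-0.csv"
part_1 = lake / "part-1.csv"
write_part(part_0, [(1, "trout"), (2, "carp")])
write_part(part_1, [(3, "pike")])

# The catalog persists the address of every file that makes up the table.
catalog = {"fishes": [str(part_0), str(part_1)]}

rows = scan(catalog, "fishes")
print(len(rows), [r["species"] for r in rows])
```

Without the catalog entry, the two files are just objects in storage; nothing says they belong to the same table.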

Table maintenance and recovery time

It helps to take a local-first approach when learning a concept. So let’s try to run everything locally and replicate the issues.

Every data pipeline can be expressed as a source, a pipe, and a sink.
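One way to see this locally is with plain Python generators. This is only a sketch: the record layout and the cleaning rule inside `pipe` are made up for the example, and an in-memory list stands in for the real destination.

```python
from typing import Iterable, Iterator


def source() -> Iterator[dict]:
    """Source: emits raw records (hard-coded here for the example)."""
    yield {"id": 1, "species": " Trout "}
    yield {"id": 2, "species": "carp"}
    yield {"id": 3, "species": ""}


def pipe(records: Iterable[dict]) -> Iterator[dict]:
    """Pipe: normalizes the species name and drops records without one."""
    for record in records:
        species = record["species"].strip().lower()
        if species:
            yield {**record, "species": species}


def sink(records: Iterable[dict], target: list) -> int:
    """Sink: appends records to the target and returns how many were written."""
    count = 0
    for record in records:
        target.append(record)
        count += 1
    return count


table = []
written = sink(pipe(source()), table)
print(written, table)
```

Because each stage only consumes an iterable and produces one, any stage can be swapped out (a file reader as the source, a database writer as the sink) without touching the others.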

<aside> 💡 How many x rows, y columns and z chars make a GB of data?

</aside>
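A back-of-the-envelope answer, under loud assumptions: the data is plain-text CSV, every character is one byte (ASCII), every value has the same length z, each value is followed by exactly one delimiter byte (a comma or a newline), and 1 GB means 10^9 bytes. Under those assumptions the size is roughly x * y * (z + 1) bytes. The function names below are made up for the example.

```python
GB = 10**9  # assumption: decimal gigabyte, 10^9 bytes


def csv_size_bytes(rows: int, cols: int, chars_per_value: int) -> int:
    """Estimated size: each value costs its characters plus one delimiter byte."""
    return rows * cols * (chars_per_value + 1)


def rows_per_gb(cols: int, chars_per_value: int) -> int:
    """How many whole rows of this shape fit in one GB."""
    bytes_per_row = cols * (chars_per_value + 1)
    return GB // bytes_per_row


# Example: 10 columns of 9-character values is 100 bytes per row.
print(rows_per_gb(cols=10, chars_per_value=9))
print(csv_size_bytes(rows=10_000_000, cols=10, chars_per_value=9))
```

Compressed columnar formats such as Parquet will usually come out smaller than this estimate, so treat it as an upper-bound intuition for text files rather than a sizing rule.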